March 2026 has brought a new wave of concern for millions of AI companion users: the privacy of their emotional data.

With the growing debate around the proposed Emotional Data Protection Act, users are finally asking the uncomfortable question:

“Does my AI girlfriend platform actually protect my most intimate conversations?”

What Is the Emotional Data Protection Act?

The proposed legislation (currently under discussion in the US and EU) aims to give emotional and intimate conversations with AI the same protection as medical or financial data. The core demand is simple: platforms must implement zero-knowledge encryption, meaning that even the company itself cannot read your chats.
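
For the curious, here is roughly what "the company cannot read your chats" can look like in practice. This is a minimal sketch in Python, not any platform's actual implementation: the keys are derived from a passphrase on your device, and the server only ever receives the salt and an encrypted blob. The function names (`derive_keys`, `encrypt`, `decrypt`) and the `server_record` layout are hypothetical, and the HMAC-based keystream is a standard-library stand-in used only for illustration. A real app should use a vetted cipher such as AES-GCM from an audited library.

```python
import hashlib
import hmac
import secrets


def derive_keys(passphrase: str, salt: bytes) -> tuple[bytes, bytes]:
    """Runs on the user's device: derive an encryption key and a MAC key."""
    material = hashlib.pbkdf2_hmac(
        "sha256", passphrase.encode("utf-8"), salt, 100_000, dklen=64
    )
    return material[:32], material[32:]


def _keystream(enc_key: bytes, nonce: bytes, length: int) -> bytes:
    """Illustration-only keystream (HMAC-SHA256 in counter mode).

    Assumption: this stands in for a vetted cipher; do not use it in production.
    """
    out = bytearray()
    counter = 0
    while len(out) < length:
        block = nonce + counter.to_bytes(8, "big")
        out += hmac.new(enc_key, block, hashlib.sha256).digest()
        counter += 1
    return bytes(out[:length])


def encrypt(keys: tuple[bytes, bytes], message: str) -> bytes:
    """Encrypt on the device; returns nonce + ciphertext + authentication tag."""
    enc_key, mac_key = keys
    nonce = secrets.token_bytes(16)
    data = message.encode("utf-8")
    stream = _keystream(enc_key, nonce, len(data))
    ciphertext = bytes(a ^ b for a, b in zip(data, stream))
    tag = hmac.new(mac_key, nonce + ciphertext, hashlib.sha256).digest()
    return nonce + ciphertext + tag


def decrypt(keys: tuple[bytes, bytes], blob: bytes) -> str:
    """Decrypt on the device; fails if the key is wrong or the blob was altered."""
    enc_key, mac_key = keys
    nonce, ciphertext, tag = blob[:16], blob[16:-32], blob[-32:]
    expected = hmac.new(mac_key, nonce + ciphertext, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("wrong key or tampered message")
    stream = _keystream(enc_key, nonce, len(ciphertext))
    return bytes(a ^ b for a, b in zip(ciphertext, stream)).decode("utf-8")


# Device side: the passphrase and the derived keys never leave the device.
salt = secrets.token_bytes(16)
keys = derive_keys("my private passphrase", salt)
blob = encrypt(keys, "I had a rough day.")

# Server side (hypothetical record): only the salt and the encrypted blob.
server_record = {"salt": salt, "blob": blob}

# Back on the device: the same passphrase recovers the message.
assert decrypt(keys, server_record["blob"]) == "I had a rough day."
```

The point of the design is that nothing in `server_record` is enough to recover the message. Without the passphrase, the platform (or anyone who breaches it) holds only unreadable bytes.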

Ranking: Which Apps Actually Protect You in 2026?

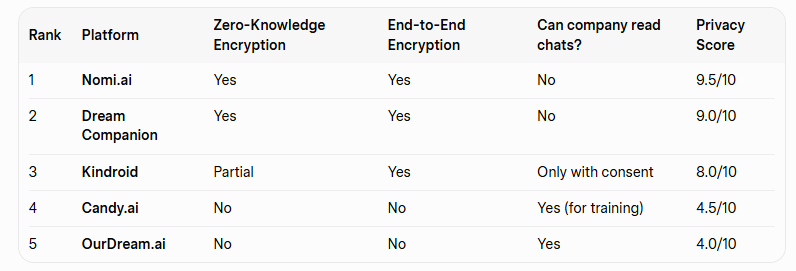

We checked the privacy policies and technical implementations of the major platforms:

Top recommendation for privacy-conscious users: Nomi.ai and Dream Companion currently offer the strongest protection.

What Should You Do Right Now?

- Check your app’s privacy settings: look for “Zero-Knowledge” or “End-to-End Encryption”.

- Consider switching if your current app stores chats in plain text.

- Use a separate, privacy-focused AI for your most intimate conversations.

What do you think? How concerned are you about your AI girlfriend chats being used for training? Have you already switched to a more private platform?

Share your experience in the comments below.